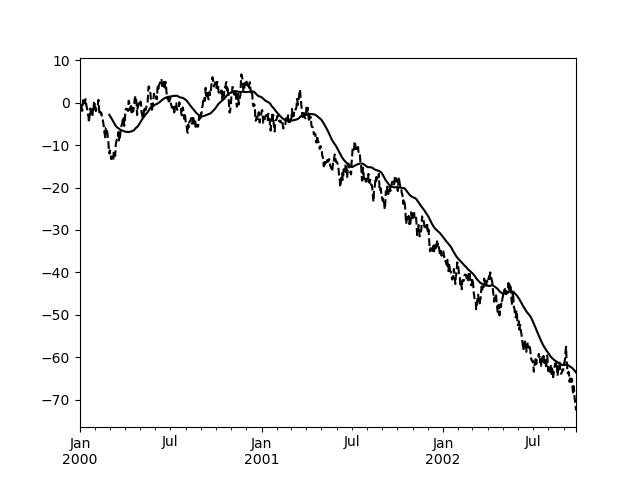

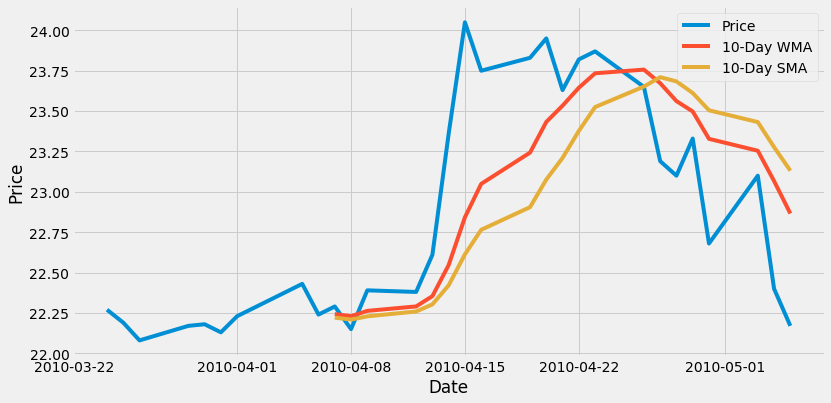

The sigmoid function gives us an S-shaped curve that takes any real number and maps it to a value between 0 and 1. Similar to linear regression, we can implement logistic regression with scikit-learn and then fit the model to our data:

from sklearn.linear_model import LogisticRegression

Since we used two independent variables, we got two coefficients back. Note that these are only interpretable when you normalize your data first, since you can’t draw any conclusions from their magnitudes otherwise. Since our features were normalized beforehand, we can look at the magnitude of our coefficients to tell us the importance of each independent variable. Here you can see that the second variable, Humidity3pm, was much more important to our outcome than the humidity from that morning. This is intuitive, since we are trying to predict the rain for tomorrow!

We can also print the accuracy to see how our model performed. Here it correctly classified around 85% of the observations in the test set.

Exercise: Linear Regression

Other noteworthy functions include predict, and ravel for data preparation.

Evaluating models

Regression techniques: R-squared (the coefficient of determination), the mean absolute error (MAE), and the mean squared error (MSE).

R-squared tells us the proportion of the variance of the dependent variable that is explained by the regression model. R-squared was also discussed when analyzing relationships between two or more variables. It is often the first metric data scientists go to when evaluating their model. Here the residuals are plotted and show us how good a fit our model is. MAE is the sum of the absolute residuals divided by the number of points, and MSE is the sum of the squared residuals divided by the number of points.
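The three regression metrics described here can be computed by hand. The sketch below uses only the Python standard library; the function names and the toy values in `y_true` and `y_pred` are made up for illustration and do not come from the rain dataset.

```python
def mae(y_true, y_pred):
    """Mean absolute error: sum of absolute residuals over the number of points."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true, y_pred):
    """Mean squared error: sum of squared residuals over the number of points."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Proportion of the variance in y_true explained by the predictions."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # unexplained variation
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)             # total variation
    return 1.0 - ss_res / ss_tot


# Hypothetical observed values and model predictions.
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.0, 7.5, 9.0]

print("MAE:", mae(y_true, y_pred))
print("MSE:", mse(y_true, y_pred))
print("R-squared:", r_squared(y_true, y_pred))
```

Because MSE squares each residual, it penalizes large errors more heavily than MAE does, which is worth keeping in mind when choosing between the two.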
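As a rough sketch of the logistic regression workflow described in this section, the example below fits scikit-learn's `LogisticRegression` on two normalized features and prints the coefficients and the test accuracy. The data is synthetic and generated purely for illustration (the second feature is deliberately given a stronger effect), so the numbers will not match the rain example or its 85% accuracy.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic two-feature data (an assumption for this sketch): the label
# depends weakly on feature 0 and strongly on feature 1.
rng = np.random.RandomState(0)
n = 500
X = rng.normal(size=(n, 2))
noise = rng.normal(scale=0.5, size=n)
y = (0.2 * X[:, 0] + 2.0 * X[:, 1] + noise > 0).astype(int)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Normalize the features so coefficient magnitudes are comparable.
scaler = StandardScaler().fit(X_train)
X_train_s = scaler.transform(X_train)
X_test_s = scaler.transform(X_test)

clf = LogisticRegression()
clf.fit(X_train_s, y_train)

# Two independent variables give two coefficients (shape (1, 2)).
print("coefficients:", clf.coef_)

# score() returns the mean accuracy on the given test data.
accuracy = clf.score(X_test_s, y_test)
print("accuracy:", accuracy)
```

The scaler is fitted on the training split only and then applied to the test split, so no information from the test set leaks into the normalization.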

In the linear regression example, the coefficient is essentially the slope of the line: a value of 0.8 tells us that for every 1-unit increase in the independent variable, the predicted dependent variable increases by 0.8 units.

Logistic regression

Another regression technique is logistic regression, one of the most common machine learning techniques for two-class classification. While linear regression gives us a continuous output, logistic regression produces a discrete output. Thanks to the sigmoid function, it lets us compute the probability that each observation belongs to a class. The sigmoid function is also called the logistic function.
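To make the role of the sigmoid concrete, here is a minimal standard-library sketch. The helper names `sigmoid` and `predict_class` and the 0.5 threshold are assumptions chosen for illustration; they show how a continuous score can be turned into a probability and then into a discrete class.

```python
import math


def sigmoid(z):
    """Logistic function: squashes a real number into the range 0 to 1."""
    # Branch on the sign so math.exp never receives a large positive argument.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_class(z, threshold=0.5):
    """Turn the probability into a discrete 0/1 label (threshold is an assumption)."""
    return 1 if sigmoid(z) >= threshold else 0


for z in (-5.0, 0.0, 5.0):
    print(z, round(sigmoid(z), 4), predict_class(z))
```

A score of 0 maps to a probability of exactly 0.5, and scores further from 0 map to probabilities closer to 0 or 1.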

More variables can be included in the linear model by simply adding a beta coefficient for each additional factor. Note that sometimes you will only see the linear component, with our intercept and slope, without the random error component.

To implement linear regression in Python, we’ll call on the scikit-learn package:

from sklearn.linear_model import LinearRegression

After creating the linear regression object and changing any default parameters, simply call the fit function to create your model. Since we have only one independent variable in this example, there is only one coefficient.
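A minimal sketch of that workflow with scikit-learn might look like the following. The toy data is hypothetical and noise-free (generated from an intercept of 2.0 and a slope of 0.8) so that the fitted values are easy to check; it is not the dataset used in the original example.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Hypothetical toy data: y = 2.0 + 0.8 * x exactly, with no noise.
# scikit-learn expects X to be 2-D: (n_samples, n_features).
X = np.array([1.0, 2.0, 3.0, 4.0, 5.0]).reshape(-1, 1)
y = 2.0 + 0.8 * X.ravel()  # ravel flattens X back to 1-D for the target

model = LinearRegression()  # default parameters
model.fit(X, y)

# One independent variable, so coef_ holds a single slope.
print("slope:", model.coef_)
print("intercept:", model.intercept_)

# Predict the outcome for a new, unseen value of x.
pred = model.predict(np.array([[6.0]]))
print("prediction for x=6:", pred)
```

With more than one column in `X`, `coef_` would contain one entry per independent variable.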


Getting started

Regression and Classification

Regression models

Regression is a technique used to model and analyze the relationships between variables and how they contribute to producing a particular outcome. More concretely, it’s a way to determine which variables have an impact, which don’t, which factors interact, and how certain we are about this.

Linear regression

The most common regression techniques are linear and logistic regression. In order to effectively leverage regression models, we need the true relationship between the variables to be linear, the errors to be normally distributed and homoscedastic (uniform variance), and each observation to be independent. Simple linear regression involves one independent and one dependent variable with a linear relationship. This results in a fit that will look similar to the plot. We are solving for the Y-value, or the dependent variable, which is our output. This is calculated by taking the y-intercept, plus our population slope coefficient times the independent variable X, plus our random irreducible error term.
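The model described above (intercept, plus slope times X, plus a random error term) can be sketched in plain Python. Everything here is an illustrative assumption: the true intercept of 2.0, the slope of 0.8, the noise level, and the helper names `simulate` and `fit_simple_ols`. The fit uses the standard closed-form least-squares estimates for a single independent variable.

```python
import random


def simulate(n, intercept, slope, noise_sd, seed=0):
    """Generate points from y = intercept + slope * x + random error."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    ys = [intercept + slope * x + rng.gauss(0.0, noise_sd) for x in xs]
    return xs, ys


def fit_simple_ols(xs, ys):
    """Closed-form least-squares estimates for one independent variable."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept, slope


xs, ys = simulate(n=200, intercept=2.0, slope=0.8, noise_sd=0.1)
b0, b1 = fit_simple_ols(xs, ys)
print("estimated intercept:", b0)
print("estimated slope:", b1)
```

The estimates land close to, but not exactly on, the true values: the gap is the effect of the irreducible error term.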